How I Shipped 25,000 Lines of Code in 3 Days With Zero Manual Coding

I ran two AI sessions in parallel, one as PM and one as dev team, and shipped a full product in three days. Here's the architecture, what broke, and what it means for building software.

On Tuesday afternoon I opened a doc with 19 pieces of team feedback about our product. Clinicians hated the post-call workflow. The voice agent sounded wrong. Cards couldn't be reordered. I sat down with one AI session and spent an hour turning that feedback into architecture decisions. By 3pm, 18 tickets were in Linear with dependency chains. By 3:01pm, a second AI session had picked them up, spun up agents in parallel git worktrees, and started building. By 6pm, eight features were live on staging, including a 1,187-line dashboard panel I never touched. I reviewed the PRs, merged them, and went to dinner.

Over three days, this pipeline shipped 25,000 lines across 39 tickets. I wrote none of the code. I wrote every spec.

The architecture

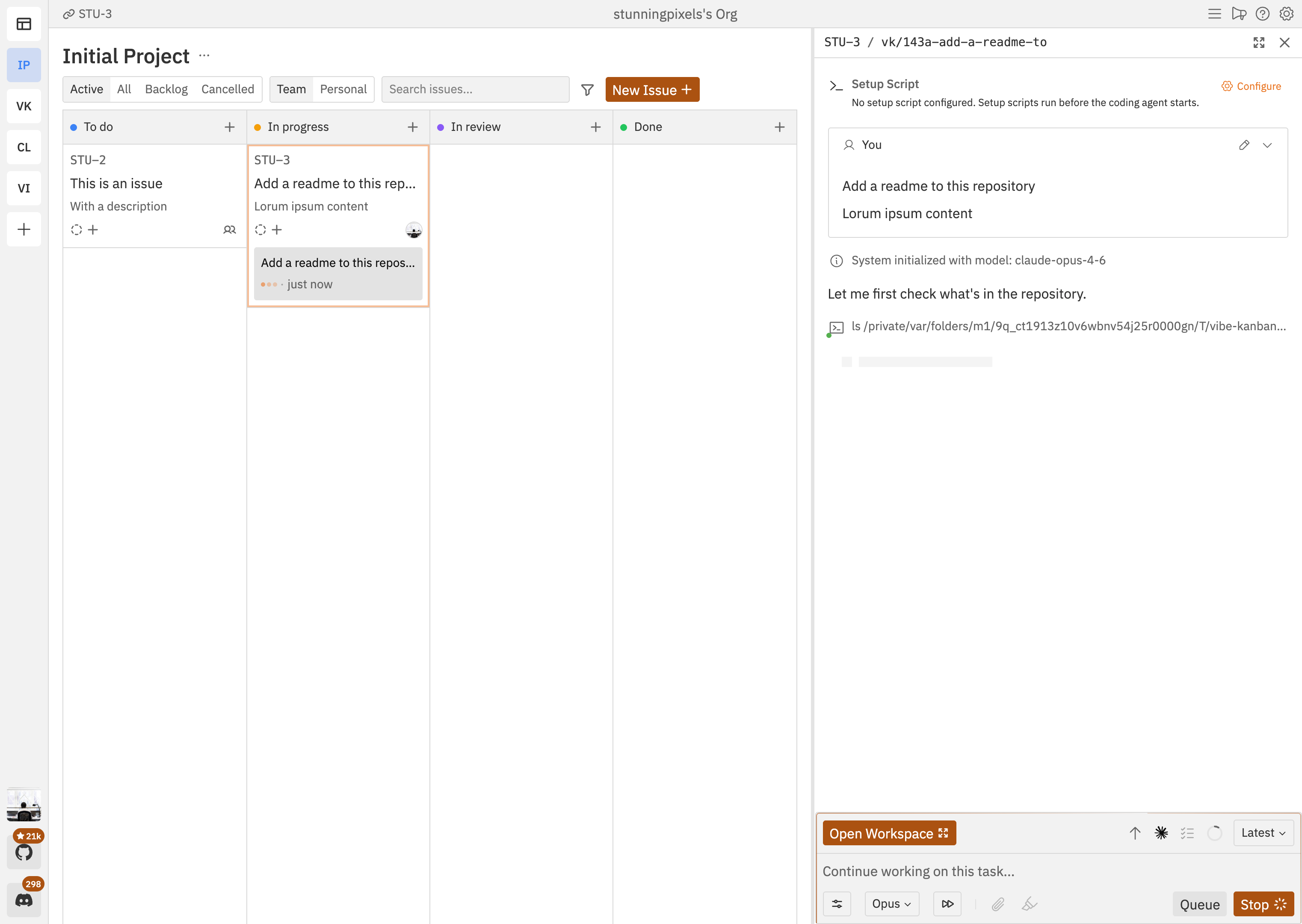

The PM session is an interactive Claude Code conversation where I talk through product direction. It doesn't write code. It writes tickets — specs with file paths, line numbers, acceptance criteria, dependency chains — and pushes them into Linear. The dev session is a separate agent running Cline in kanban mode. It pulls tickets from Linear, spins up sub-agents in isolated git worktrees, and ships PRs. I never interact with the dev session. It watches the board and executes.
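To make "specs with file paths, line numbers, acceptance criteria, dependency chains" concrete, here is a minimal sketch of a ticket as data. The class and field names (`TicketSpec`, `CodePointer`, `blocked_by`) are my own illustration, not Linear's schema and not the exact format the PM session emits.

```python
from dataclasses import dataclass, field


@dataclass
class CodePointer:
    """A location in the existing codebase the agent should start from."""
    path: str
    start_line: int
    end_line: int


@dataclass
class TicketSpec:
    """One unit of work handed from the PM session to the dev session (hypothetical shape)."""
    ticket_id: str
    title: str
    description: str
    pointers: list[CodePointer] = field(default_factory=list)
    acceptance_criteria: list[str] = field(default_factory=list)
    blocked_by: list[str] = field(default_factory=list)  # IDs of prerequisite tickets

    def problems(self) -> list[str]:
        """Return the reasons this ticket is too vague to hand to an agent."""
        issues = []
        if not self.pointers:
            issues.append("no file paths or line numbers")
        if not self.acceptance_criteria:
            issues.append("no acceptance criteria")
        for p in self.pointers:
            if p.start_line < 1 or p.end_line < p.start_line:
                issues.append(f"bad line range in {p.path}")
        return issues
```

A check like `problems()` could run before a ticket is pushed to the board, so a vague spec bounces back to the PM conversation instead of reaching an agent.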

The separation matters because context windows have a carrying capacity. When I tried running PM and dev in the same session, the product conversation degraded as implementation details piled up. The PM started suggesting code patterns instead of questioning whether the feature was right. Two sessions means the PM holds the full product context — user research, design rationale, architecture — and the dev holds the full codebase. Linear sits between them. I make decisions on one side and review PRs on the other.

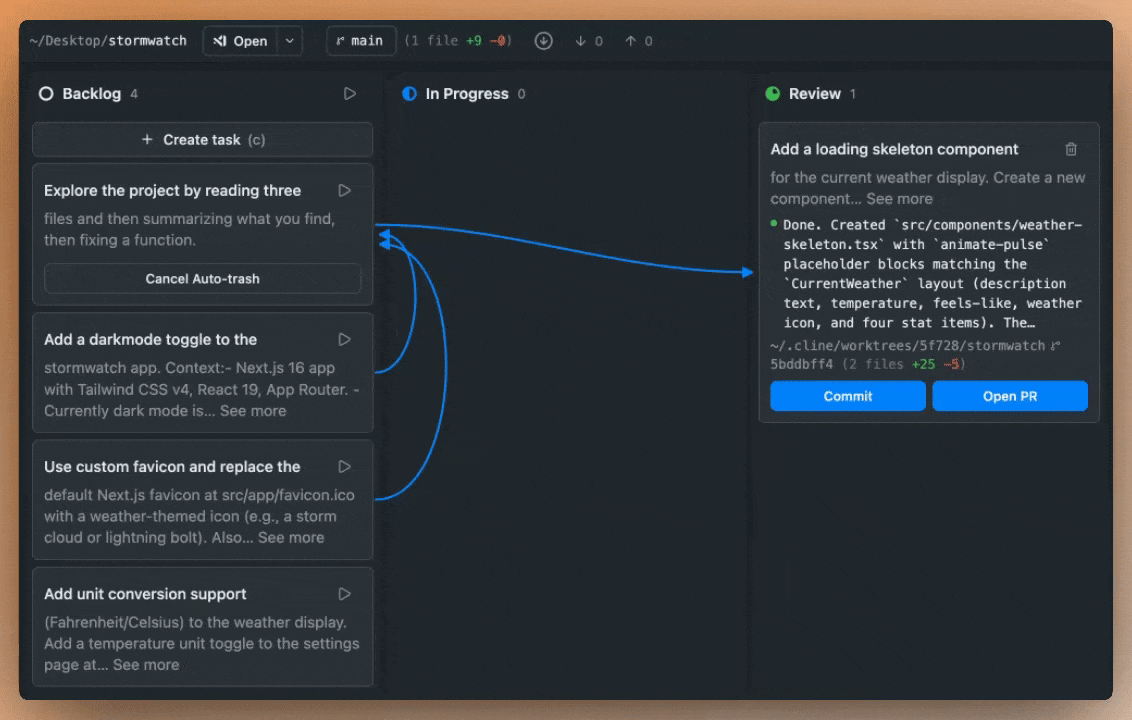

This is the dev session's interface — Cline Kanban. Each card is a task with its own terminal and isolated git worktree. Blue lines are dependency chains. When a card finishes, its dependents auto-start. The Review column shows a completed agent ready for me to commit or open a PR.
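The "isolated git worktree" per card comes down to one `git worktree add` call per task. The sketch below shows roughly what that looks like if you script it yourself. The branch naming (`agent/<ticket>`), the sibling-directory layout, and the `main` base branch are assumptions for illustration, not what Cline does internally.

```python
import subprocess
from pathlib import Path


def worktree_command(repo: Path, ticket_id: str, base: str = "main") -> list[str]:
    """Build the git command that creates an isolated checkout for one ticket."""
    slug = ticket_id.lower()
    branch = f"agent/{slug}"  # hypothetical naming scheme
    # A sibling directory per ticket, so agents never share a working tree.
    path = repo.parent / f"{repo.name}-{slug}"
    return ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(path), base]


def create_worktree(repo: Path, ticket_id: str, base: str = "main") -> None:
    """Run the command; raises CalledProcessError if git refuses."""
    subprocess.run(worktree_command(repo, ticket_id, base), check=True)
```

Each worktree gets its own branch and directory while sharing one object store, which is what lets several agents edit the same repository at once without touching each other's files.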

What this looks like in practice

Day one: infrastructure. OAuth integrations, CI pipeline, test harnesses, API clients. I talked through the system boundaries with the PM session; it wrote a ticket for each one. Dev agents built them in parallel — each in its own worktree, no merge conflicts, no coordination overhead. 38 commits by midnight. Day two: stabilize and release. Bugs from the rapid build got triaged into fix tickets with exact file paths and line numbers. When you tell an agent precisely where the problem is, it doesn't waste half its context window hunting.

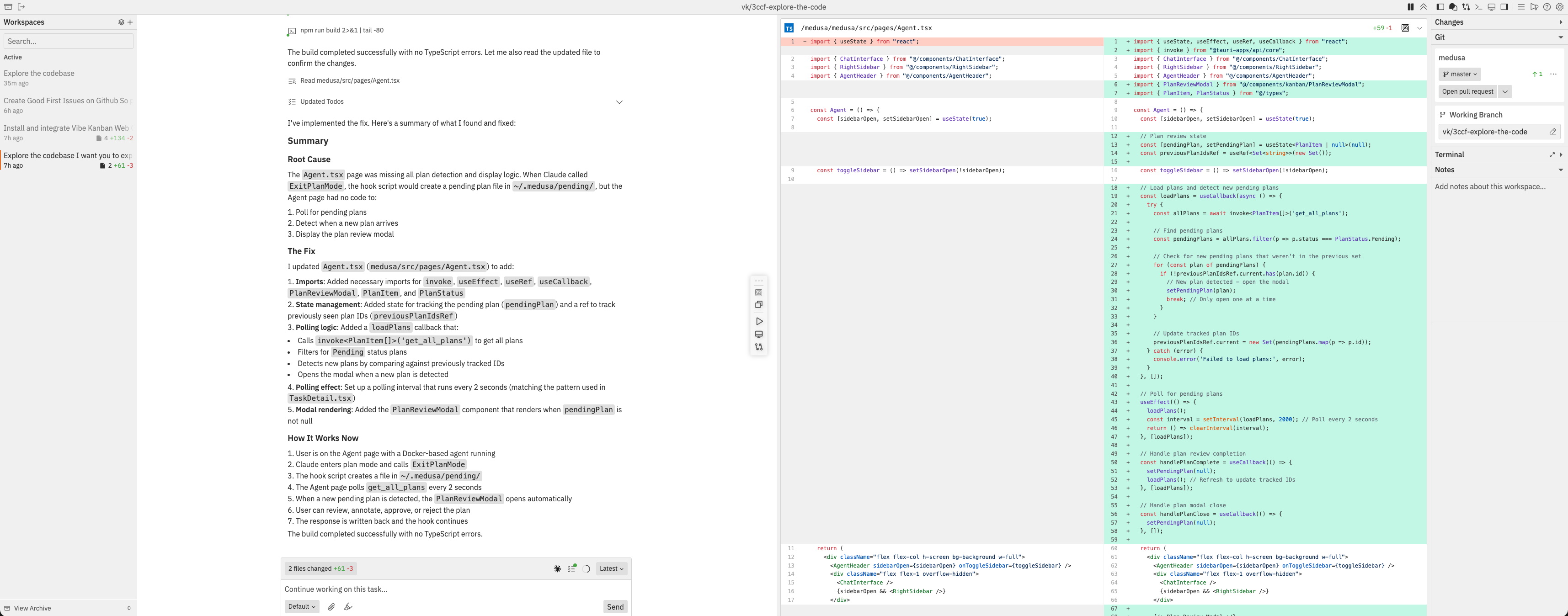

Day three is where it got interesting. We'd accumulated 19 pieces of product feedback — UX complaints, feature requests, workflow gaps. I spent an hour with the PM session turning that feedback into a new UX architecture: a slide-over panel to replace page navigation, a restructured scripts system, drag-and-drop kanban reordering. The PM session decomposed the architecture into 18 tickets with dependency chains and pushed them to Linear. Dev agents started immediately — two tracks in parallel, voice and configuration on one, UI and dashboard on the other. The biggest deliverable was a 1,187-line slide-over panel with four tabs. I described the behavior in the ticket. The agent read the existing 1,700-line calls page, extracted the relevant sections into a new component, wired up tab switching and animation, and opened a PR with passing tests. I reviewed the diff, approved it, and moved on. The whole feature took about 40 minutes from ticket to merged PR.

What I learned

The agents that shipped clean code on the first pass got tickets with file paths, line numbers into existing code, and concrete acceptance criteria. The agents that flailed got vague descriptions and burned their context window exploring the codebase. Ticket quality is agent quality. I spend about 80% of my time writing specs now. The code writes itself. The specs don't.

Dependency chaining turned a serial queue into parallel execution. Without it, I'd finish one task, notice, then manually kick off the next. With chains defined in Linear, Track A and Track B ran simultaneously, and downstream tasks fired the moment their prerequisites landed. Eighteen tickets across two parallel tracks took about three hours of wall-clock time. Sequentially, that's a week.
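The mechanics are simple enough to sketch. Assuming each ticket lists the tickets that block it, "fire the moment prerequisites land" is a readiness check, and the wall-clock time is the longest chain rather than the sum. The ticket names and durations below are made up, and the sketch assumes there is always a free agent for every ready ticket.

```python
def ready_tickets(blocked_by, done, running):
    """Tickets whose prerequisites have all landed and that have not started yet."""
    return sorted(
        t for t, deps in blocked_by.items()
        if t not in done and t not in running and all(d in done for d in deps)
    )


def wall_clock(blocked_by, minutes):
    """Total elapsed time if every ticket starts the moment its prerequisites land."""
    finish = {}

    def finish_time(ticket, stack=()):
        if ticket in stack:
            raise ValueError(f"dependency cycle through {ticket}")
        if ticket not in finish:
            deps = blocked_by[ticket]
            start = max((finish_time(d, stack + (ticket,)) for d in deps), default=0)
            finish[ticket] = start + minutes[ticket]
        return finish[ticket]

    return max(finish_time(t) for t in blocked_by)


# Hypothetical board: track A (two tickets) and track B (three tickets).
chains = {"A1": [], "A2": ["A1"], "B1": [], "B2": ["B1"], "B3": ["B2"]}
minutes = {"A1": 30, "A2": 20, "B1": 10, "B2": 25, "B3": 40}

parallel = wall_clock(chains, minutes)   # 75: the longer of the two tracks
sequential = sum(minutes.values())       # 125: one ticket at a time
```

With two tracks the saving is the length of the shorter track. The bigger win in practice is that nobody has to notice a task finished before the next one starts.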

There's a soft ceiling around 500 lines per task. Beyond that, agents start to degrade: hallucinating function signatures, losing track of which file they're editing, redoing work they already finished. The 1,187-line slide-over panel worked, but it was an exception at the very top of what a single task could carry. Anything bigger needs to be split into chained subtasks with commit checkpoints between them.
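Here is a rough sketch of that split, assuming you have an up-front estimate of the size and treating 500 lines as the per-task budget. The ID scheme (`PANEL.1`, `PANEL.2`, ...) and the dictionary fields are hypothetical. The real split should follow component boundaries, not just line counts.

```python
def split_into_chain(ticket_id, estimated_lines, max_lines=500):
    """Split one oversized ticket into subtasks that each depend on the previous one."""
    count = max(1, -(-estimated_lines // max_lines))  # ceiling division
    subtasks = []
    for i in range(count):
        subtasks.append({
            "id": f"{ticket_id}.{i + 1}",
            # The last subtask gets whatever is left over.
            "line_budget": min(max_lines, estimated_lines - i * max_lines),
            # Chaining: each subtask waits for the one before it to be committed.
            "blocked_by": [subtasks[-1]["id"]] if subtasks else [],
        })
    return subtasks


panel = split_into_chain("PANEL", 1187)
```

For the 1,187-line panel this yields three chained subtasks with budgets of 500, 500, and 187 lines, each starting from the previous one's commit.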

The weakest link is the handoff. Every manual step between "PM creates ticket" and "dev picks it up" (copying descriptions, reformatting specs, re-tagging priorities) loses information. Both sessions read from and write to Linear directly through its API, which eliminates most of the loss. But ticket IDs still get mistyped. Statuses go stale. The handoff is where I'm losing the most throughput, and it's the next thing I'm automating away.
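A first step toward automating the handoff is a sanity check that catches both failure modes before an agent acts on them. This sketch works on a plain dictionary snapshot of the board, not on Linear's API. The ID pattern, the `"In Progress"` status name, and the two-hour staleness threshold are all assumptions.

```python
import re
from datetime import datetime, timedelta, timezone

# Assumed ID shape: team key, hyphen, number (for example "ENG-42").
TICKET_ID = re.compile(r"^[A-Z][A-Z0-9]*-\d+$")


def handoff_problems(referenced_ids, board, now, stale_after=timedelta(hours=2)):
    """List mistyped or unknown ticket IDs and in-progress cards nobody has updated."""
    problems = []
    for tid in referenced_ids:
        if not TICKET_ID.match(tid):
            problems.append(f"{tid}: malformed ID")
        elif tid not in board:
            problems.append(f"{tid}: not on the board")
    for tid, card in board.items():
        if card["status"] == "In Progress" and now - card["updated_at"] > stale_after:
            problems.append(f"{tid}: status looks stale")
    return problems


# Hypothetical snapshot of two cards.
now = datetime(2025, 1, 1, 18, 0, tzinfo=timezone.utc)
board = {
    "ENG-1": {"status": "In Progress", "updated_at": now - timedelta(hours=5)},
    "ENG-2": {"status": "Done", "updated_at": now - timedelta(days=1)},
}
report = handoff_problems(["ENG-1", "ENG-3", "eng2"], board, now)
```

Here `report` flags `ENG-3` as missing, `eng2` as malformed, and `ENG-1` as stale. A check like this could run on every ticket reference either session produces.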

Where this goes

Three days, one person, 25,000 lines. That's today, with a pipeline I stitched together in a few weeks. And I'm not the only one building this way. Vibe Kanban, the YC-backed orchestrator with 14,000+ GitHub stars, gives you a full kanban UI with diff review built into each card. Gastown, Steve Yegge's framework, runs 20-30 parallel agents with a "Mayor" that coordinates them and an immutable decision log he calls "beads." Conductor wraps Claude Code in a native Mac app with agent roles — Researcher, Builder, Reviewer — and four coordination patterns. Claude Code itself now has native Agent Teams, experimental but shipping. The ecosystem is exploding because everyone hit the same wall: one agent is fast, but orchestrating many is where the real leverage is.

The constraint right now is me — I can only review PRs so fast, and I can only hold so many product threads in my head at once. Boris Cherny, who built Claude Code, recommends 3-5 parallel worktrees. I ran 2 tracks this sprint. The ceiling is rising every week.

Here's what the next twelve months look like. The PM agent gets good enough that I check in twice a day instead of staying in the conversation. The dev agents get reliable enough that I batch-review PRs instead of watching them land. The handoff becomes fully automated — ticket created, agent assigned, PR opened, CI green, merged — with me as an async reviewer who intervenes only when something looks wrong. One founder, shipping what used to take a ten-person team. Not because the code got simpler. Because the coordination cost collapsed.

The skill that matters now is management — speccing work, structuring dependencies, reviewing output, maintaining quality across a fleet of agents. The founders who learn to manage machines will build faster than the ones still trying to be the machine. The bottleneck moved. Move with it.